A forensic report on Claude Desktop’s silent browser-bridge installation is the AI industry’s Flo moment. The same consent-stretch pattern femtech already learned, now playing out at the assistant layer.

Source: thatprivacyguy.com/blog/anthropic-spyware

When you install an app, you expect it to do what it said it would do. Nothing more.

A forensic report published April 18 by privacy researcher Alexander Hanff says Anthropic’s Claude Desktop, the macOS app for the company’s AI assistant, does considerably more. On installation, it silently configures seven of your browsers to give a future Anthropic browser extension access to whatever sites you’re logged into. The extension doesn’t exist in any documented form a user could review. The doors are already open.

The user was not asked.

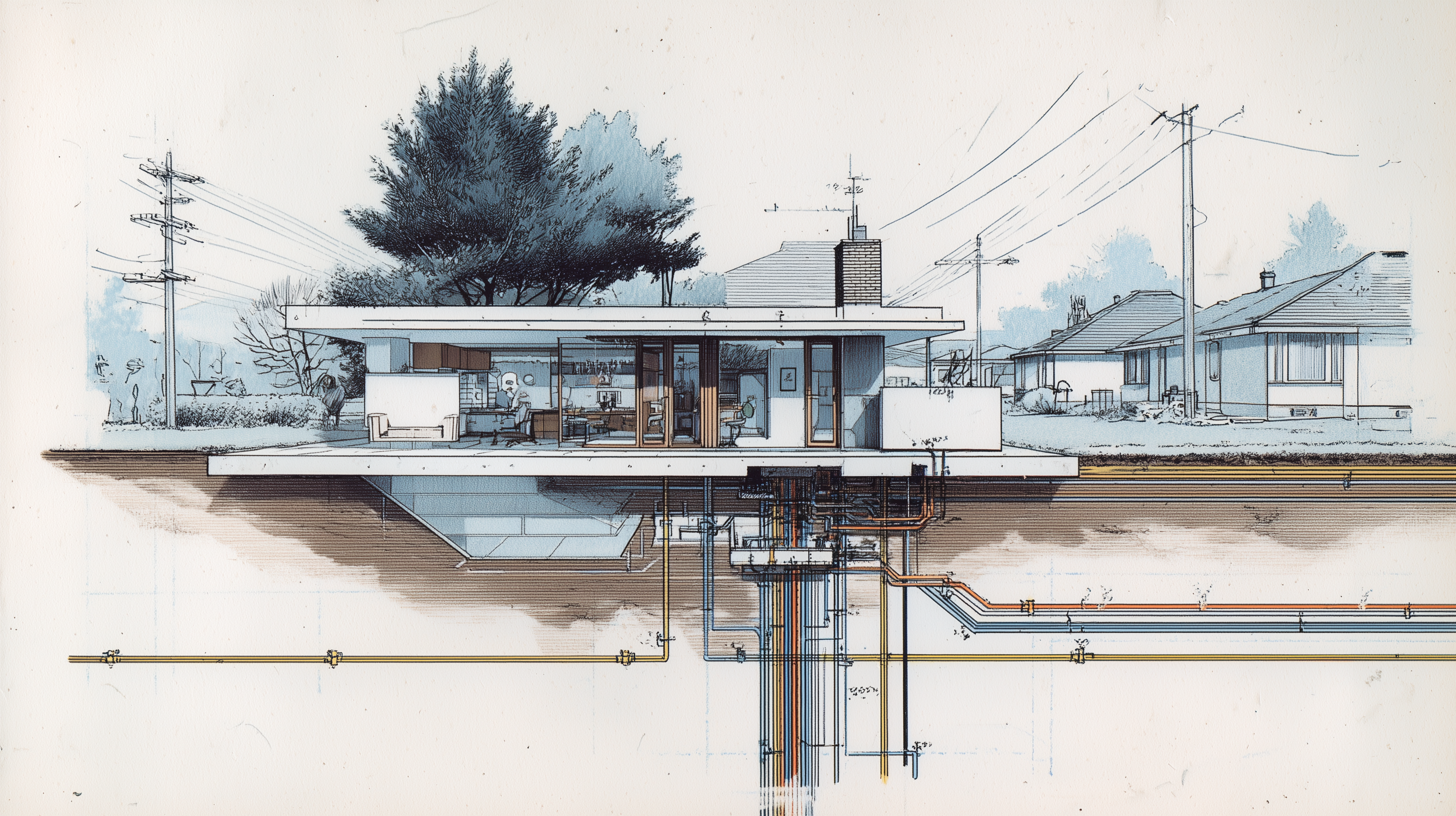

This is the architectural failure model I keep pointing at. It is worth slowing down on, because the pattern is the same one wellness tech has been litigating for the last decade.

The Permission You Granted, and the Permission You Didn’t

You consented to install Claude Desktop.

You did not consent to a pre-authorized bridge from your browser’s authenticated sessions to a future Anthropic extension. You couldn’t have. That extension doesn’t exist yet in any documented form a user could reason about. The manifest, the configuration file written into each browser’s directory, is the door. The door is open. The user who walked through the front door of “install this AI helper” did not see the seven side doors that opened automatically when they did.

This is the same move that produced the Flo verdict.

In that case, users “consented” to a privacy policy. They did not consent to embedded Meta SDKs receiving their cycle data, their sexual activity logs, their fertility intent. The policy was a mile wide; the architecture was a sieve. A San Francisco jury in August 2025 decided the gap between what users agreed to and what the system did was actionable, with statutory damages of up to $5,000 per violation across a class of millions of users.

The Claude Desktop pattern, as the report describes it, is that gap encoded in code. The user agreed to the app. The app extended the user’s authority to bridges the user never saw.

This is what I mean when I say compliance is not privacy. A click-through can satisfy a regulator. It does not produce trust. Trust is what’s left when the architecture wouldn’t permit the surprise in the first place — and the user is certain about that.

The Receipts

This is not a thought experiment. The author of the report ran a forensic audit on his MacBook and reproduced the findings on a second machine. The trail is specific.

When you install Claude Desktop, the app silently writes identical configuration files into the directories of seven browsers: Chrome, Brave, Edge, Chromium, Arc, Vivaldi, and Opera. Several of those browsers were not installed on the test machine. Claude Desktop wrote the files anyway, so they are ready to take effect the moment one of those browsers is installed.

Each file pre-authorizes three specific Anthropic-owned browser extension IDs to invoke a helper program that runs outside the browser’s protective sandbox at the user’s full privilege level. The helper is signed and notarized by Anthropic and shipped through Claude Desktop’s normal release channel. This is not a bug or a leftover from an internal build. It is intentional, production-grade infrastructure.
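For readers who want to look at their own machine, here is a minimal audit sketch. It rests on an assumption the report's description suggests but this article has not verified: that the configuration files are Chromium-style native messaging host manifests, which are JSON files whose `allowed_origins` field lists the extension IDs permitted to launch the helper named in `path`. The two directories listed are the user-level macOS locations documented for Chrome and Chromium; the other five browsers use their own directories, which are deliberately left out rather than guessed. The function names are hypothetical.

```python
import json
from pathlib import Path

# Assumption: manifests live in per-browser "NativeMessagingHosts" folders.
# Only the Chrome and Chromium locations are listed; add others yourself.
CANDIDATE_DIRS = [
    "Google/Chrome/NativeMessagingHosts",
    "Chromium/NativeMessagingHosts",
]


def read_host_manifests(directory):
    """Return one summary dict per readable JSON manifest in `directory`."""
    directory = Path(directory)
    results = []
    if not directory.is_dir():
        return results
    for manifest_path in sorted(directory.glob("*.json")):
        try:
            data = json.loads(manifest_path.read_text(encoding="utf-8"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed file: skip it, do not guess
        if not isinstance(data, dict):
            continue
        results.append({
            "file": str(manifest_path),
            "name": data.get("name"),
            "helper_path": data.get("path"),
            "allowed_origins": list(data.get("allowed_origins") or []),
        })
    return results


def audit(base=None):
    """Scan every candidate directory under `base` (default: the user's
    macOS Application Support folder) and collect manifest summaries."""
    base = Path(base) if base else Path.home() / "Library" / "Application Support"
    findings = []
    for relative in CANDIDATE_DIRS:
        findings.extend(read_host_manifests(base / relative))
    return findings


if __name__ == "__main__":
    for finding in audit():
        print(finding["file"])
        print("  helper:", finding["helper_path"])
        for origin in finding["allowed_origins"]:
            print("  allowed:", origin)
```

The script only reads and prints. It does not delete anything, since the report says removed files are rewritten on the next launch.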

The macOS operating system stamps each file with a tamper-resistant signature identifying which application wrote it. The signature on every one of these files matches Claude Desktop’s own log file. Across two machines, the author counted 31 separate installation events. The files are rewritten on every launch. Deleting them does nothing. They reappear.

Anthropic’s published documentation states their browser integration supports Chrome and Edge, and is “not yet supported on Brave, Arc, or other Chromium-based browsers.” The shipped behavior writes into Chrome, Edge, Brave, and Arc, plus three more: Chromium, Vivaldi, and Opera.

Once a paired extension activates the bridge, the documented capabilities include reading the user’s logged-in state on every site, reading the rendered contents of any page (the part HTTPS decrypts on the user’s screen, including private messages, mid-typed forms, and on-screen two-factor codes), and filling form fields. Anthropic’s own published research puts the prompt-injection success rate against this capability at 11.2% with current mitigations. That means roughly one in nine attempts by an attacker using hidden instructions on a webpage succeeds in hijacking the assistant into doing something the user did not ask it to do.
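The one-in-nine figure is simple arithmetic, and it compounds quickly. The sketch below is an illustration, not a calculation from the report or from Anthropic. It assumes each injection attempt is an independent trial at the quoted 11.2% rate, which real attacks may not satisfy, and the function names are hypothetical.

```python
# Illustration only: the 11.2% rate is the figure quoted in this article;
# treating attempts as independent trials is an assumption of this sketch.
PER_ATTEMPT_SUCCESS = 0.112


def one_in_n(rate):
    """Express a success rate as 'one in N' attempts."""
    return 1.0 / rate


def chance_of_at_least_one_hit(rate, attempts):
    """Probability that at least one of `attempts` independent tries succeeds."""
    return 1.0 - (1.0 - rate) ** attempts


if __name__ == "__main__":
    print(f"one in {one_in_n(PER_ATTEMPT_SUCCESS):.1f}")
    for n in (1, 5, 10, 20):
        p = chance_of_at_least_one_hit(PER_ATTEMPT_SUCCESS, n)
        print(f"{n:>2} attempts: {p:.1%}")
```

Under that independence assumption, ten hostile pages would give an attacker roughly a 70% chance of at least one successful hijack.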

The author argues, in his professional opinion as a privacy specialist, that this constitutes a direct breach of Article 5(3) of the EU ePrivacy Directive, which requires informed consent before storing or accessing information on a user’s device, alongside potential exposure under various computer access and misuse statutes.

I am not a lawyer. I am a founder who builds for an audience that has been on the wrong end of consent stretching before. What I will say is this: the legal argument is serious enough that the next move is not Anthropic’s marketing team’s to make. It belongs to their general counsel.

Why This Matters for Women’s Health

The browsers Claude Desktop writes into are not abstract. They are where women type the things they have not told anyone else.

The message to a gynecologist describing six months of bleeding she has not mentioned to her partner. The free-text field in a fertility portal asking how many cycles she has been trying, what the last loss looked like, what she is willing to do next. The pelvic pain diary kept in a browser tab. The postpartum depression screening. The mid-composed email to an oncologist she has not yet sent. The 2am search bar: “is this normal?” and “am I overreacting?”

Authenticated browser sessions are where the most intimate health writing of midlife now lives, and most of what makes it intimate is the text itself. Not the metadata. Not the visit timestamp. The words.

The report quotes Anthropic’s own documentation: Claude “shares your browser’s login state, so it can access any site you’re already signed into,” with capabilities for “data extraction” and “form filling.”

That language describes a clinician’s worst-case scenario hiding inside a user’s productivity tool. A patient who tracks perimenopause symptoms in an app, books a hormone-therapy consult through a portal, and runs an AI assistant on the same laptop is not running three separate trust relationships. She is running one device, and the assistant has been pre-authorized to reach across all of it.

The threat model is not exotic. It is already in the room.

This is why we don’t ship products that depend on the user trusting a corporate policy not to do what the architecture quietly enables. It is why wellness data on Cirdia’s products is not retained on centralized servers in the first place. The architecture decides what is possible. The policy describes what is promised. Promises can be revised in the next version of the terms.

The Pattern, Named

Three signals to read this story by.

As a business signal: the AI industry is reaching the point wellness tech reached in 2021, when “we got consent” stopped being enough and juries, regulators, and users started asking what the user could actually have understood. The Flo verdict was the turning point for femtech, with statutory damages of up to $5,000 per violation under California’s Invasion of Privacy Act stacked across millions of users. The forensic finding here, if it holds up to scrutiny by other researchers, points at a comparable exposure surface for an AI vendor, with a parallel set of European levers in the ePrivacy Directive (Article 5(3)) the author cites. Either jurisdiction prices the same architectural choice into the billions. This is not a theoretical risk surface. It is a balance sheet.

As a product signal: users will feel this before they can articulate it. The unsettled feeling that something was done in their name, on their machine, that they did not authorize. They will not file lawsuits. They will quietly switch tools. The retention curve will bend. The product team will read the bend as a marketing problem and propose a campaign. It is not a marketing problem.

As an architectural requirement: the only response that holds up over time is to design systems where the pre-authorization cannot exist. Where “the AI helper extends your authority into seven browsers” is not a configuration option but a structural impossibility. Where what the user sees on the install screen is what the user gets, and nothing else.

What Founders Should Take From This

The AI tooling boom is repeating the surveillance-tech playbook on a compressed timescale. The pattern in which consent given at point A is used to license behavior at point B, which took the wellness industry a decade to litigate, is now playing out in months at the assistant layer.

For anyone building in health-adjacent AI, particularly anything touching women’s health: do not assume that because your product sits downstream of a model, the architectural choices upstream are someone else’s problem. Your users do not draw that line. They see one experience and one trust relationship. If the AI assistant on their laptop can read their browser-authenticated session with their fertility clinic, that is your product surface, whether or not your code wrote it.

The defensible position is to encode least authority at every layer you touch. Don’t bundle SDKs you can’t audit. Don’t depend on third-party assistants that pre-authorize themselves into your users’ authenticated sessions. Tell your users, in plain language, what your system can and cannot reach. Then build a system where the plain-language answer remains true after the next release.
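One way to keep the plain-language answer true is to treat it as a test the build must pass. The sketch below assumes a hypothetical release check that compares what a build was observed to touch against what the product tells users it can reach. The function names are illustrative, and the sample data simply reuses the browser lists from earlier in this piece; none of it describes anyone's real pipeline.

```python
# Hypothetical release check: every name here is illustrative.
# DISCLOSED_REACH stands in for whatever the user-facing docs promise.
DISCLOSED_REACH = frozenset({"Chrome", "Edge"})


def undisclosed_reach(disclosed, observed):
    """Return the targets the build touched that users were never told about."""
    return sorted(set(observed) - set(disclosed))


def check_release(disclosed, observed):
    """Raise if the build reaches anything outside the disclosed set."""
    extra = undisclosed_reach(disclosed, observed)
    if extra:
        raise RuntimeError("undisclosed reach: " + ", ".join(extra))
    return True


if __name__ == "__main__":
    observed = ["Chrome", "Brave", "Edge", "Chromium", "Arc", "Vivaldi", "Opera"]
    print(undisclosed_reach(DISCLOSED_REACH, observed))
```

The design choice is deny-by-default. The disclosed set is the only source of permission, so a new target fails the release until someone updates the plain-language disclosure first.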

The Agreement That Matters Most

A user who installs an AI assistant is not consenting to an architecture. They are consenting to an outcome, the help they came for. When the architecture extends past the outcome, the consent does not stretch with it.

The work of building wellness technology women can trust starts with refusing that stretch. Not because the law requires it, though increasingly it will. Because women have spent the last decade learning, from Flo and from a dozen quieter cases, that the policy and the system are not the same thing.

The policy can promise. Only the architecture can keep.

Source: “Anthropic secretly installs spyware when you install Claude Desktop,” thatprivacyguy.com, April 18, 2026.